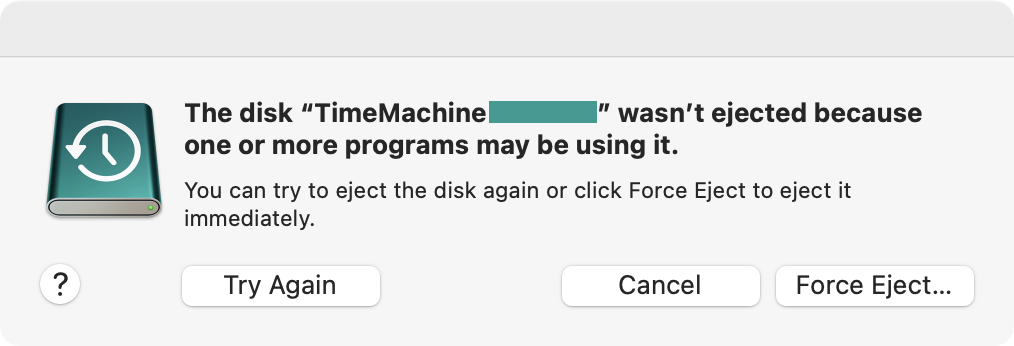

Ever since upgrading to a recent Mac that came with the disk formatted with APFS, a perennial irritation has been Time Machine. I use a USB hard drive for backups, which of course needs unplugging when I want to take the machine with me somewhere. There are long stretches of time when I don’t even think about this because it works just fine. And then there are the other stretches of time when this has been impossible: clicking the eject button in Finder does nothing for a few ponderous moments and then shows a force eject dialog. (And of course the command line tools and other methods all equally fail.)

I could of course forcibly eject the disk, as the dialog offers. And maybe I would, if this was just a USB stick I was using to shuffle around some files. But doing this with my backup disk seems rather less clever. This disk I want to unmount in good order.

Unfortunately when this happens, there is no help for it: even closing all applications does not stop the mystery program from using it. So what is the program which is using the disk? The Spotlight indexer, it turns out.

    $ sudo lsof +d /Volumes/TimeMachine\ XXXXXX/
    COMMAND  PID USER   FD   TYPE DEVICE SIZE/OFF NODE NAME
    mds     1234 root    5r   DIR   1,24      160    2 /Volumes/TimeMachine XXXXXX
    mds     1234 root    6r   DIR   1,24      160    2 /Volumes/TimeMachine XXXXXX
    mds     1234 root    7r   DIR   1,24      160    2 /Volumes/TimeMachine XXXXXX

How do you ask this to stop?

Beats me. I have not found any documented, official way of doing so. Not the Spotlight privacy settings, not removing the disk from the list of backup disks in the Time Machine settings, not the combination of those, no conceivable variation of using tmutil on the command line, not a number of other things – nothing. Even killall -HUP mds does not help: obviously the Spotlight service notices, and just respawns the processes.

And this state will persist for hours and days – literally. On one occasion, I wanted but didn’t need the machine with me, so I left it to its own devices out of curiosity. It took over 2 days before ejecting the Time Machine volume worked again.

For a purportedly portable computer, this is… you know… a bit of a showstopper.

So after suffering this issue long enough, I finally tried something stupid the other day – and whaddayaknow, it works:

    $ sudo killall -HUP mds ; sudo umount /Volumes/TimeMachine\ XXXXXX/

This will not always work the first time; it may need a repeat or two. But sooner rather than later it does take. Evidently the mds process respawn is not so quick that it wouldn’t leave a window during which the disk can be unmounted properly.

And so I put the following in ~/bin/macos/unmount-despite-mds and made it executable:

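    #!/bin/bash

    if (( $# != 1 )) ; then echo "usage: $0 <mountpoint>" 1>&2 ; exit 1 ; fi

    # device ID of the directory that contains the mount point
    parent_dev=$( stat -f %d "$1"/.. ) || exit $?

    # loop while the path still exists, still sits on a different device
    # than its parent (i.e. is still mounted), and umount keeps failing
    while [[ -d $1 ]] && [[ "$( stat -qf %d "$1" )" != "$parent_dev" ]] && ! umount "$1" ; do
      killall -HUP mds
      lsof +d "$1"
    done

Now I can invoke it at any time from the terminal like so: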

    $ sudo ~/bin/macos/unmount-despite-mds /Volumes/TimeMachine\ XXXXXX/

What this does is check whether the given path, if it is a mount point, fails to unmount. If so, it sends a signal to terminate the Spotlight indexer processes and immediately retries. In a loop.

Or to put it more colloquially, it machineguns Spotlight until umount can slip in under the suppressing fire and pull the disk out from under it.
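The least obvious part of the script is probably how it decides whether the path is still a mount point: it compares the device ID of the directory with that of its parent. Pulled out on its own, that test might look something like the sketch below. The helper names `device_id` and `is_mount_point` are made up for illustration, and the fallback to GNU `stat -c` is an addition so that the sketch also runs on Linux; the script itself only needs the BSD form, `stat -f %d`.

```shell
#!/bin/bash
# Sketch only: device_id and is_mount_point are hypothetical helper names.

# Print the ID of the device a path lives on.
# GNU stat takes -c for a format; the BSD stat shipped with macOS takes -f.
device_id() {
  stat -c %d "$1" 2>/dev/null || stat -f %d "$1"
}

# A directory counts as a mount point if it sits on a different device
# than its parent directory.
# Caveat: "/" is its own parent, so it is reported as not a mount point.
is_mount_point() {
  local self parent
  self=$( device_id "$1" ) || return 1
  parent=$( device_id "$1/.." ) || return 1
  [[ $self != "$parent" ]]
}
```

With these defined, something like `is_mount_point /Volumes/TimeMachine\ XXXXXX && echo "still mounted"` would report whether the volume is still attached. Once the unmount succeeds, the directory under /Volumes either disappears or ends up on the same device as its parent, which is why the loop condition checks for both.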

This is not a solution. It is the bluntest of instruments. But this is what works. And as far as I have been able to find, this is the only thing that works.
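A slightly less blunt variant might cap the number of attempts, so that the loop cannot spin forever if something other than mds is holding the disk. The following is only a sketch of that idea, not a tested replacement: the function name `try_unmount` and the default of 20 attempts are arbitrary choices made here, and for brevity it skips the mount-point check and the lsof output of the script above.

```shell
#!/bin/bash
# Sketch only: try_unmount and the default of 20 attempts are assumptions,
# not part of the original script.

# try_unmount MOUNTPOINT [MAX_ATTEMPTS]
# Returns 0 as soon as umount succeeds, 1 if every attempt failed.
try_unmount() {
  local mountpoint=$1 max_attempts=${2:-20} attempt
  for (( attempt = 1; attempt <= max_attempts; attempt++ )); do
    if umount "$mountpoint" 2>/dev/null; then
      echo "unmounted after $attempt attempt(s)"
      return 0
    fi
    # Same nudge as in the article: HUP makes the mds processes shut down,
    # after which they get respawned.
    killall -HUP mds
  done
  echo "gave up after $max_attempts attempts" >&2
  return 1
}

# Run only when arguments are given, so the file can also be sourced.
if (( $# > 0 )); then
  try_unmount "$@"
fi
```

Saved as a script, it would be invoked the same way as the original, with an optional attempt limit as a second argument. Like the original, it needs to run under sudo for both umount and killall to work.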

Three cheers for Apple software quality, I guess.

Update: Sending HUP instead of TERM is a better idea. Both cause the mds processes to shut down, but TERM seems to leave Spotlight in a bad state: for some time after, some search results take longer to show up and search result order gets messed up, which painfully disrupts my muscle memory. With HUP I have not observed the same issue. I have added it to all the examples in the article.